Haiming Gang
I am a Senior Machine Learning Engineer at Apple working on multimodal LLMs and 3D scene understanding. Prior to joining Apple, I was a Research Engineer at the Honda Research Institute (HRI) USA, where I mainly worked on 3D scene understanding and multi-agent interaction modeling for autonomous driving. I also worked on indoor mobile robots and manipulation. I received my MS in Mechatronics and Robotics from New York University in 2017, where I was advised by Vikram Kapila. I obtained my BS in Mechanical Engineering from Shanghai University. I'm interested in robotics, computer vision, and machine learning. Much of my research is about understanding the environment surrounding a robot or self-driving car from multiple sensors (lidar, camera, GPS/IMU).
GitHub / Google Scholar / LinkedIn / Projects / Robots

Research

UniGen-1.5: Enhancing Image Generation and Editing through Reward Unification in Reinforcement Learning
Rui Tian, Mingfei Gao, Haiming Gang, Jiasen Lu, Zhe Gan, Yinfei Yang, Zuxuan Wu, Afshin Dehghan
Conference on Computer Vision and Pattern Recognition (CVPR), 2026
arxiv
Proposes UniGen-1.5, a unified multimodal large language model (MLLM) for advanced image understanding, generation, and editing. Building on UniGen, it enhances the architecture and training pipeline to improve image understanding and generation while enabling strong image editing capabilities.

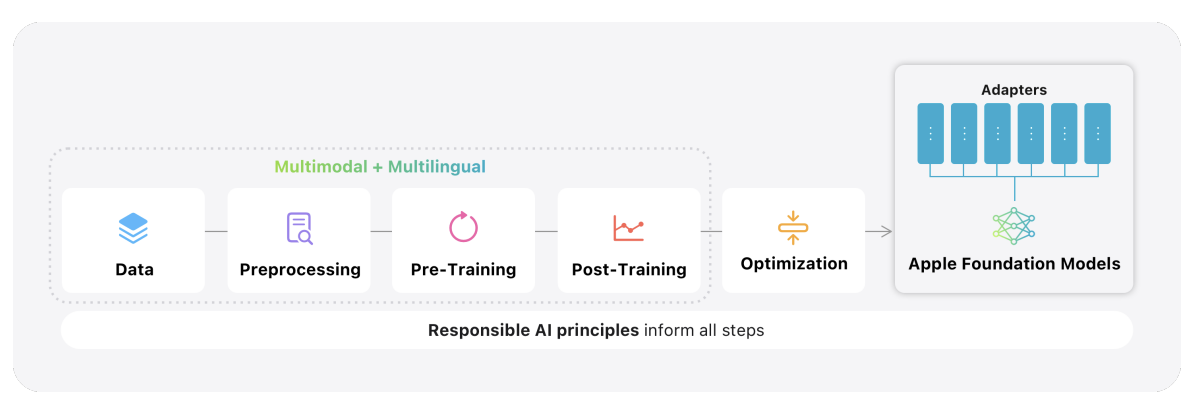

Apple Intelligence Foundation Language Models Tech Report 2025
Tech report, 2025
tech report
Introduces two multilingual, multimodal foundation language models that power Apple Intelligence features across Apple devices and services.

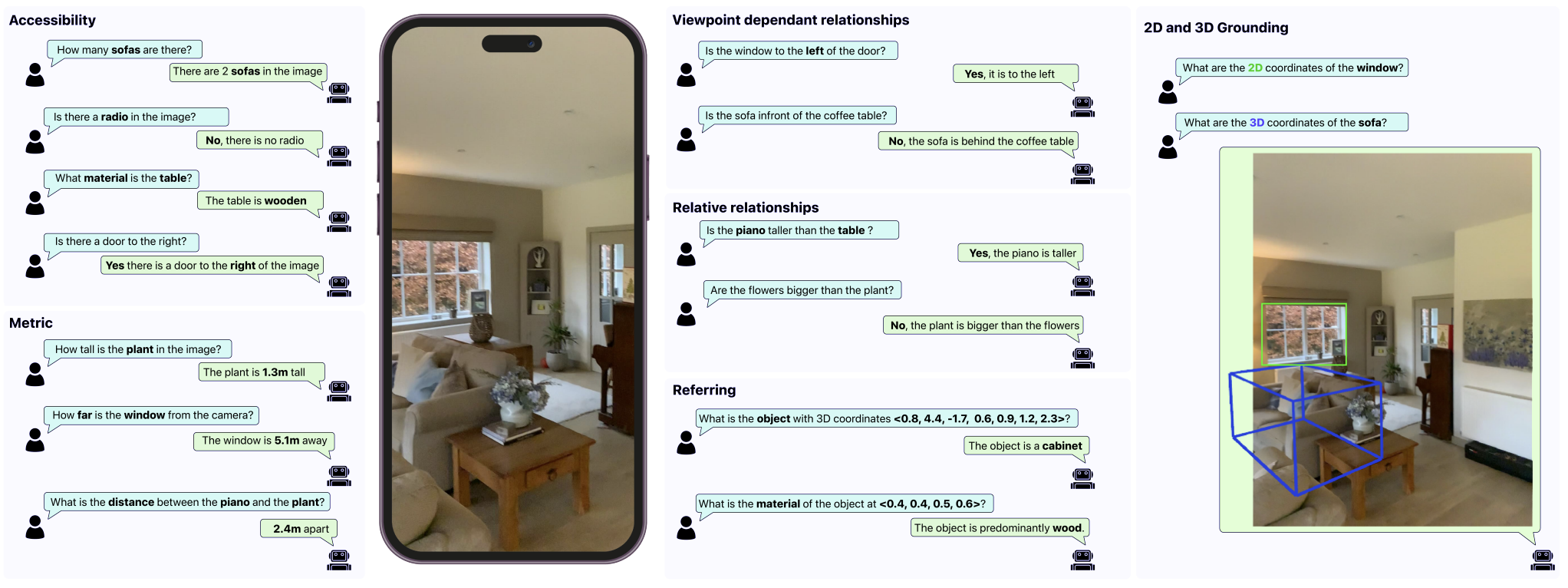

MM-Spatial: Exploring 3D Spatial Understanding in Multimodal LLMs
Erik Daxberger*, Nina Wenzel*, David Griffiths*, Haiming Gang, Justin Lazarow, Gefen Kohavi, Kai Kang, Marcin Eichner, Yinfei Yang, Afshin Dehghan, Peter Grasch
International Conference on Computer Vision (ICCV), 2025
arxiv
Introduces a 3D scene dataset and benchmark (CA-VQA) for spatial tasks, enabling the MM-Spatial model to achieve state-of-the-art performance on 3D spatial understanding. Incorporating metric depth and multi-view inputs yields depth perception comparable to dedicated depth models.

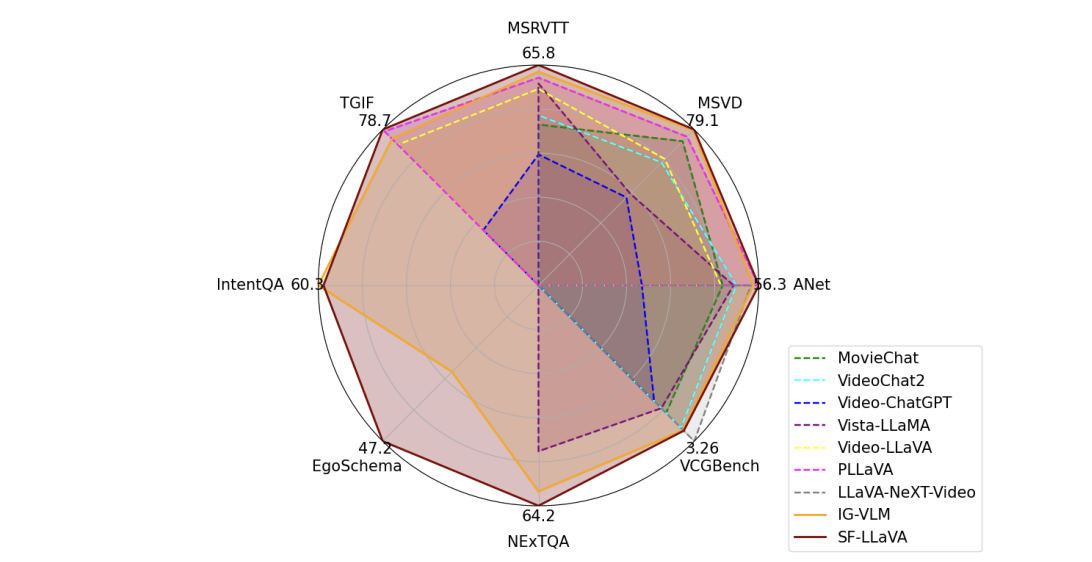

SlowFast-LLaVA: A Strong Training-Free Baseline for Video Large Language Models
Mingze Xu*, Mingfei Gao*, Zhe Gan, Hong-You Chen, Zhengfeng Lai, Haiming Gang, Kai Kang, Afshin Dehghan
arXiv preprint, 2024
arxiv
Proposes SlowFast-LLaVA (SF-LLaVA for short), a training-free video large language model (LLM) that jointly captures detailed spatial semantics and long-range temporal context without exceeding the token budget of commonly used LLMs.

Important Object Identification with Semi-Supervised Learning for Autonomous Driving
Jiachen Li*, Haiming Gang*, Hengbo Ma, Masayoshi Tomizuka, Chiho Choi
International Conference on Robotics and Automation (ICRA), 2022
arxiv
Proposes a novel approach to important object identification in egocentric driving scenarios that uses relational reasoning over the objects in the scene.

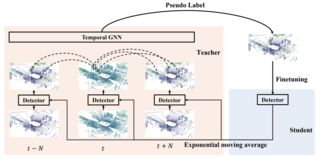

Semi-supervised 3D Object Detection via Temporal Graph Neural Networks
Jianren Wang*, Haiming Gang*, Siddharth Ancha, Yi-Ting Chen, David Held
International Conference on 3D Vision (3DV), 2021
arxiv
Proposes leveraging large amounts of unlabeled point cloud videos for semi-supervised learning of 3D object detectors via temporal graph neural networks.

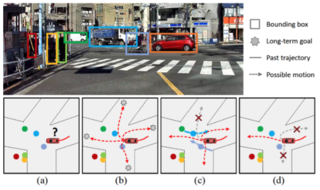

LOKI: Long Term and Key Intentions for Trajectory Prediction
Harshayu Girase*, Haiming Gang*, Srikanth Malla, Jiachen Li, Akira Kanehara, Karttikeya Mangalam, Chiho Choi
International Conference on Computer Vision (ICCV), 2021
arxiv / dataset
Proposes LOKI (LOng term and Key Intentions), a novel large-scale dataset designed to tackle joint trajectory and intention prediction for heterogeneous traffic agents (pedestrians and vehicles) in an autonomous driving setting.

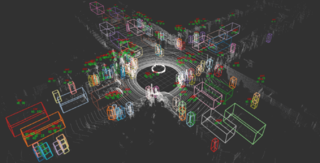

The H3D Dataset for Full-Surround 3D Multi-Object Detection and Tracking in Crowded Urban Scenes
Abhishek Patil, Srikanth Malla, Haiming Gang, Yi-Ting Chen
International Conference on Robotics and Automation (ICRA), 2019
arxiv / dataset
Presents the Honda Research Institute 3D Dataset (H3D), a large-scale full-surround 3D multi-object detection and tracking dataset collected using a 3D LiDAR scanner.

Projects
The projects that I have worked on

Curious Minded Machines
HRI: CMM, 2021-06-02
project link
The Curious Minded Machine project seeks to develop intelligent systems capable of learning continuously with a human-like sense of curiosity.

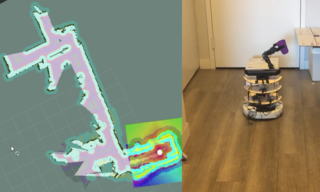

Autonomous Domestic Assistant Robot
NYU: MS project, 2017-05-08
This project developed a mobile robotic system that combines a manipulator, image processing, and motion planning with mobile devices to assist humans in an indoor environment. The system uses a Microsoft Kinect, three microcontrollers, a mobile phone, a mobile robot base, and a robotic arm.

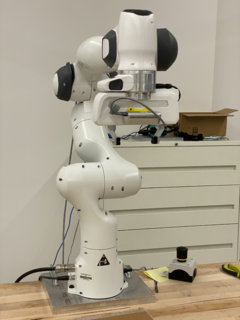

Multi-Manipulator Collaboration based on Object Detection
NYU: Robotic Gait and Manipulation, 2017-05-05
This project controlled the collaboration of multiple simple DOF manipulators for pick-and-place tasks based on object recognition, using Linemod from the ORK (Object Recognition Kitchen) library with ROS.

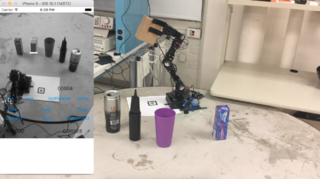

Haar Feature Object Recognition and Manipulation
NYU: MS project, 2016-12-20
This project developed an image-processing-based object recognition and manipulation system using a 5-DOF smart robotic arm, operated through a smartphone interface that senses the human user's intent.

Braille Display
NYU: Advanced Mechatronics, 2016-12-20
This project developed a device that converts alphabetic characters into braille to help people who are visually impaired read text.

TOT BOT
NYU: Robots for Disability, 2016-12-19
We developed the “Tot Bot” robot, which lets a child see the robot's surroundings on a tablet screen and then reach a selected point by touching it on the screen.

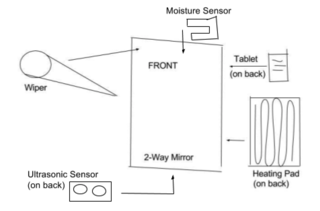

Smart Mirror: Automatic Defog and Display
NYU: Mechatronics, 2016-05-01
This project developed a Smart Mirror that automatically defogs and wipes moisture from its surface while displaying the date, time, and a news headline.

Robots
The robots that I have worked with

Franka Emika Panda

Mayfield Kuri

Fetch Mobile Manipulator

AssistantBot (Made by me)

Pioneer P3-DX


Design and source code from Leonid Keselman's website